AI Found a 27-Year-Old Vulnerability: What Project Glasswing Means for Enterprise Security

AI is uncovering zero-day vulnerabilities at scale. Project Glasswing shows what this means for enterprise security and vulnerability management.

The window between vulnerability disclosure and exploitation is shrinking — rapidly.

In some cases, it's no longer measured in weeks or days, but hours. In tightly targeted environments, potentially even less.

That's not speculation. The cost of discovering exploitable vulnerabilities is dropping, and the speed at which they can be operationalized is increasing.

Elia Zaitsev, CTO of CrowdStrike — a company that, to be fair, has seen some things — put it bluntly: the gap between discovery and exploitation is collapsing.

Project Glasswing is one of the clearest signals yet of why that's happening — and why existing security assumptions are starting to break down.

Project Glasswing Explained: What It Means for Enterprise Security

On April 7, 2026, Anthropic announced Project Glasswing, a collaboration with major industry players including Amazon Web Services, Microsoft, Google, Apple, and the Linux Foundation.

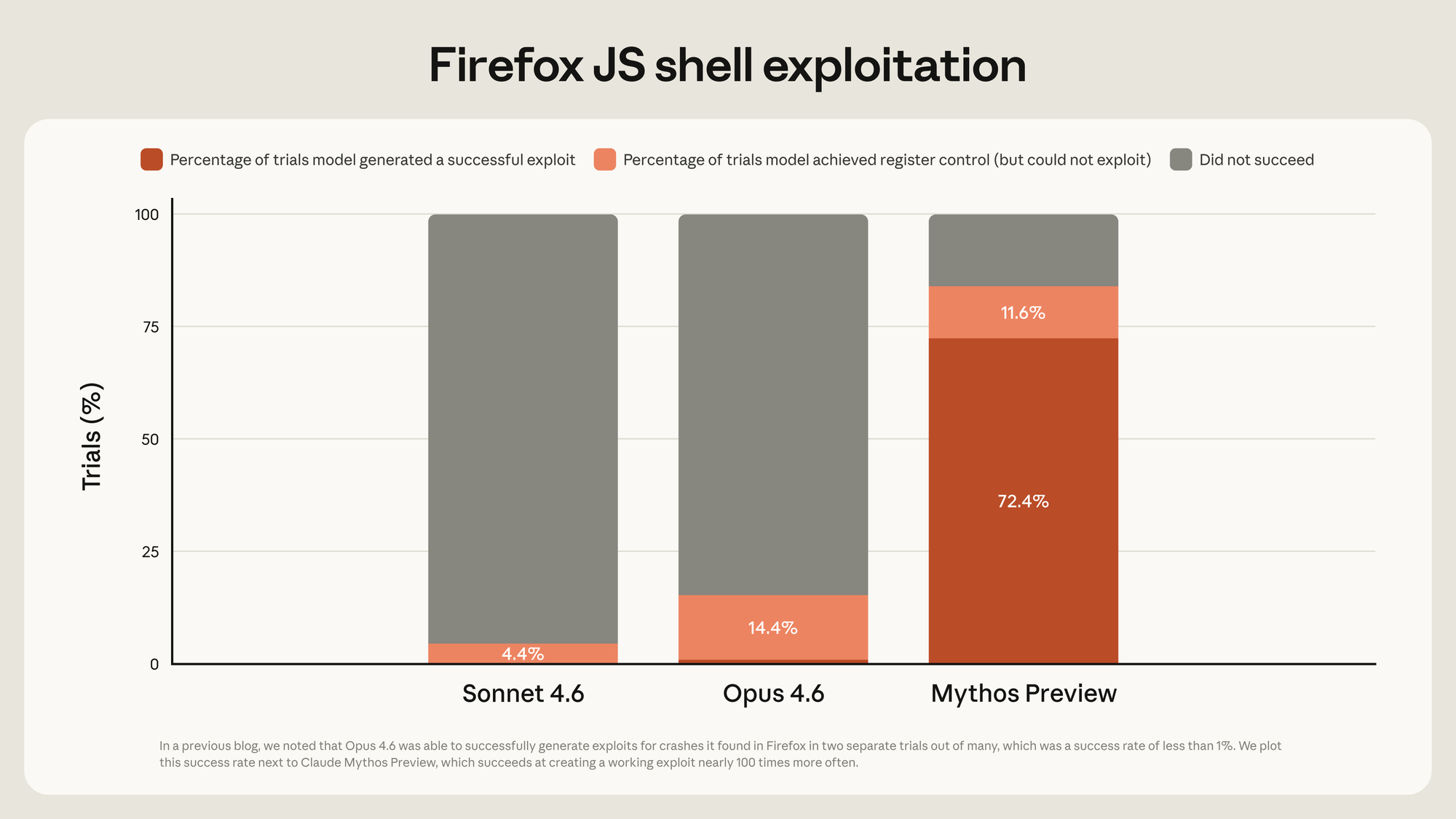

The initiative centers on a restricted model, Claude Mythos Preview, designed to identify vulnerabilities across widely used software systems.

According to Anthropic's published results, the model was able to uncover previously unknown vulnerabilities in operating systems, browsers, and widely deployed open-source libraries. These include:

- A 27-year-old bug in OpenBSD that allowed a remote attacker to crash a system by sending a specially crafted packet (patched March 25, 2026)

- A vulnerability in FFmpeg that persisted despite extensive automated testing

- Multi-step exploit chains involving the Linux kernel

These findings come from Anthropic's internal evaluations and partner testing, and while they require broader independent validation, they point to a meaningful shift: vulnerability discovery is becoming far less constrained by human time and expertise.

What matters isn't just that these vulnerabilities exist. It's that a single system can now surface them systematically, across codebases that have historically resisted both automated tools and manual review.

That changes the economics of security.

One detail that's easy to overlook: this is the same Anthropic that accidentally shipped a .map file in an npm package and exposed 512,000 lines of their own source code. Apparently when you're busy finding 27-year-old vulnerabilities in other people's code, your own .npmignore file becomes a lower priority.

Why AI Is Exposing Hidden Vulnerabilities in Your Open-Source Stack

Here's a fun thought experiment. Think about every open-source library your product depends on. Now think about who's maintaining those libraries. In many cases the answer is: two developers, a volunteer in the Netherlands, and someone who hasn't committed since 2019.

For decades, those codebases have been reasonably secure — not because they were well-audited, but because finding vulnerabilities in them required rare expertise and significant time. Security through scarcity, essentially.

That era is over.

The FFmpeg example says it all. A vulnerability sat in that codebase for 16 years. Automated tools hit the vulnerable code path five million times without catching it. Human reviewers looked at it repeatedly. Nobody found it.

Mythos Preview found it.

This isn't about FFmpeg being badly maintained. It's about the cost of vulnerability detection collapsing so dramatically that what was previously undetectable is now a compute problem. And the same AI capabilities defenders are using today will be in the hands of attackers tomorrow. As Jim Zemlin, CEO of the Linux Foundation, put it: "We are in the most dangerous period, the transition when attackers might gain a significant advantage as the technology ecosystem digests the impact of AI."

How AI Has Outpaced Enterprise Security Tooling

CTOs have spent years and significant budget on security tooling — SAST scanners, DAST frameworks, dependency databases, penetration testing. These tools are good. They catch real things.

But they were built for a world where the bottleneck was human expert time. Hire a penetration tester, give them two weeks, see what they find. That was the state of the art.

Most enterprises will respond to this moment by buying more of those same tools.

That's the wrong move.

Frontier AI changes the constraint entirely. The bottleneck is no longer human availability — it's computational cost and model access. Which means vulnerability discovery is no longer episodic. Exploit sophistication is no longer bounded by what one human can chain together. And your attack surface isn't just your code anymore — it's every dependency, every transitive dependency, every piece of infrastructure your software touches.

Your current tooling will catch some of this. It will not catch all of it. Cisco's Anthony Grieco said it plainly in their Glasswing announcement: the old ways of hardening systems are no longer sufficient. Which is a very polite way of saying: the threat model changed while you were renewing your existing contracts.
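To make the point about transitive dependencies concrete, here is a minimal Python sketch that walks a dependency graph and collects everything a service actually pulls in. The package names and the `direct_deps` mapping are invented for illustration; in practice that data would come from your lockfile or SBOM.

```python
def transitive_dependencies(direct_deps, root):
    """Return every package reachable from `root`, excluding `root` itself.

    `direct_deps` maps a package name to the packages it directly depends on.
    """
    seen = set()
    stack = list(direct_deps.get(root, ()))
    while stack:
        pkg = stack.pop()
        if pkg in seen:
            continue  # already visited; also protects against dependency cycles
        seen.add(pkg)
        stack.extend(direct_deps.get(pkg, ()))
    seen.discard(root)  # a cycle back to the root should not count as a dependency
    return seen


# Hypothetical dependency graph for a single service.
direct_deps = {
    "app": ["web-framework", "image-lib"],
    "web-framework": ["http-parser", "template-engine"],
    "image-lib": ["codec-lib"],
    "codec-lib": ["compression-lib"],
}

declared = direct_deps["app"]
all_deps = transitive_dependencies(direct_deps, "app")

print(f"declared: {len(declared)}, actually shipped: {len(all_deps)}")
print(sorted(all_deps))
```

In this toy graph, two declared dependencies turn into six packages to defend, and real dependency trees are often much deeper.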

Platform Choice Just Became a Security Decision

Here's the detail most coverage of Glasswing misses.

Claude Mythos Preview won't be broadly available. Anthropic is keeping it restricted. But it will be available through invitation-only access on the Claude API, Amazon Bedrock, Google Cloud's Vertex AI, and Microsoft Foundry.

This matters more than it seems. Whether you're on Foundry, Bedrock, or Vertex AI, the same dynamic applies: the platform you already run your infrastructure on is now also the platform through which you access frontier security capabilities. The lines between AI platform, developer platform, and security platform are collapsing into a single buying decision.

Your cloud vendor is now your security vendor.

Microsoft's integration is worth calling out specifically because of how they've approached it. Their own security benchmark — CTI-REALM — showed substantial improvements with Mythos Preview. They're not just distributing the model. They're validating it against their own standards. That's a different kind of endorsement than a press release.

For the first time, the platform you choose directly determines what frontier security capabilities you can access — not just how you build, but how well you can defend what you build. Most organizations haven't adjusted to that reality yet.

But here's where it gets complicated. Amazon, Microsoft, and Google — three companies that compete aggressively across cloud and AI — now all have access to the same frontier vulnerability-finding model. Whether that creates an uneven playing field is a question nobody is answering loudly yet. Presumably everyone involved has agreed to use it responsibly — but that assumption gets harder to maintain the further down the distribution chain you go.

Anthropic can vet 12 named partners. They can vet 40 additional organizations. But once Mythos Preview sits inside Foundry, Bedrock, or Vertex AI — platforms with hundreds of thousands of enterprise customers — who is actually vetting the end users? The Glasswing page says participants access the model through these platforms. It doesn't explain how platform-level customers get screened, what happens if a Foundry customer points Mythos at a competitor's infrastructure, or who is liable if the model gets misused downstream.

That's not a reason to dismiss Glasswing. It's a reason to watch how this plays out closely.

Rethinking Vulnerability Management in the Age of AI

Episodic security workflows are no longer sufficient. Security maturity assessments, annual pentests, quarterly scans: all were designed for a world where attackers needed weeks to operationalize a vulnerability. That world is gone. The results of a point-in-time assessment start aging the moment the report is filed, and the window between disclosure and exploitation is now measured in hours, in some cases less. Security therefore has to be continuous: every pull request, every container build, every dependency update. If it's not happening inline with development, it's already outdated. We've already instrumented our own CI/CD pipeline this way, integrating scanning at the PR level so vulnerabilities surface before they ever hit main.

The real problem isn't vulnerability volume; it's prioritization. Most teams are drowning in vulnerability data: thousands of CVEs, endless alerts, constant noise. Collecting more of it is not the problem worth solving. What you actually want is a ranked list of exploitable paths to production, prioritized by runtime reachability, architectural context, and business impact rather than raw CVSS scores. That's the conversation that belongs in your engineering all-hands, not buried in a security backlog.
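As a rough illustration of ranking by context rather than raw CVSS, the sketch below scores each finding from reachability, exposure, and business impact. The `Finding` fields, the identifiers, and the weights are all assumptions made up for this example, not an established scoring standard; a real program would tune them to its own architecture.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Finding:
    """One scanner finding plus the context a triage pass would attach."""
    finding_id: str        # placeholder identifier, not a real CVE
    cvss: float            # base score reported by the scanner, 0.0 to 10.0
    reachable: bool        # is the vulnerable code on a path that runs in production?
    internet_facing: bool  # architectural context: exposed service or internal only
    business_impact: int   # 1 (low) to 5 (critical), assigned by the owning team


def priority(f: Finding) -> float:
    """Illustrative score: CVSS is one input, context decides the ranking."""
    if not f.reachable:
        return 0.0  # unreachable code drops to the bottom regardless of CVSS
    exposure = 1.5 if f.internet_facing else 1.0  # assumed weight
    return f.cvss * exposure * f.business_impact


def rank(findings: list[Finding]) -> list[Finding]:
    """Highest priority first."""
    return sorted(findings, key=priority, reverse=True)


findings = [
    Finding("EXAMPLE-0001", cvss=9.8, reachable=False, internet_facing=True, business_impact=5),
    Finding("EXAMPLE-0002", cvss=7.5, reachable=True, internet_facing=True, business_impact=4),
    Finding("EXAMPLE-0003", cvss=8.1, reachable=True, internet_facing=False, business_impact=2),
]

ranked_ids = [f.finding_id for f in rank(findings)]
print(ranked_ids)
```

The finding with the highest CVSS score ends up last here because nothing in production can reach it, which is the behavior a raw CVSS sort cannot give you.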

Vulnerability triage doesn't scale as a manual process. I saw this play out on my own team. We had two high-severity vulnerabilities flagged by Snyk — both valid, both looked urgent. In the old model, that means a senior engineer spending significant time tracing through the code, understanding reachability, and determining whether there's an actual exploit path. That kind of triage is hard. And it doesn't scale.

Instead, we ran both through an AI assistant. Tools like Claude and Codex are increasingly capable of reasoning about vulnerabilities in context — not just detecting them, but analyzing how they could actually be exploited in your specific system. One had a real, exploitable path through our architecture. The other was technically valid but practically unreachable in our codebase. Same Snyk signal. Completely different risk.

That's the shift. It's no longer about finding vulnerabilities. It's about understanding which ones actually matter — and that changes how you allocate engineering attention, which is what vulnerability management is really about.
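The reachability question at the heart of that triage can be sketched as a graph search: given a call graph and the entry points an attacker can hit, is there any path to the vulnerable function? The example below is a simplified assumption of how such a check might look, with an invented call graph and function names. It is not how Snyk or any AI assistant works internally, and a static call graph will miss dynamic dispatch, reflection, and configuration-driven paths.

```python
from collections import deque


def find_call_path(call_graph, entry_points, target):
    """Breadth-first search from the entry points to `target`.

    Returns the shortest list of function names leading to `target`,
    or None if no entry point can reach it.
    """
    queue = deque([ep] for ep in entry_points)
    seen = set(entry_points)
    while queue:
        path = queue.popleft()
        current = path[-1]
        if current == target:
            return path
        for callee in call_graph.get(current, ()):
            if callee not in seen:
                seen.add(callee)
                queue.append(path + [callee])
    return None


# Hypothetical call graph: caller -> functions it calls.
call_graph = {
    "handle_upload": ["parse_image", "store_file"],
    "parse_image": ["decode_png"],
    "admin_cli": ["legacy_export"],
    "legacy_export": ["render_template_unsafe"],
}

# Only request handlers are exposed; admin_cli is never deployed to production.
entry_points = ["handle_upload", "handle_login"]

reachable_path = find_call_path(call_graph, entry_points, "decode_png")
unreachable_path = find_call_path(call_graph, entry_points, "render_template_unsafe")

print(reachable_path)
print(unreachable_path)
```

Both targets could carry the same scanner severity, but only `decode_png` sits on a path from an exposed entry point, so only that one would need urgent engineering attention in this toy setup.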

The next frontier in vulnerability management is AI-native security reasoning — not pattern-matching against known CVEs, but actually reading and understanding your code the way a human security researcher would: tracing data flows, understanding how components interact, and catching complex vulnerabilities that rule-based tools miss entirely. Two of the biggest AI labs shipped their version of this within weeks of each other. Anthropic launched Claude Code Security in February 2026. OpenAI followed with Codex Security two weeks later. Same idea, same direction. When two frontier AI labs independently ship competing versions of the same tool within a fortnight, that's not a trend. That's a transition. We wanted to go further with Claude Code Security on our own codebase, but access is currently limited to a research preview. We've joined the waitlist. If this is a priority for your team, you should too.

AI Security Is Now an Architectural Problem

Project Glasswing marks the point where AI security becomes an architectural concern, not a tooling one.

When models can autonomously discover zero-day vulnerabilities across operating systems, open-source software, and enterprise infrastructure, the problem shifts from detection to response. Most organizations are still optimized for the old model.

Closing that gap requires more than better scanning. It requires rethinking vulnerability management as a continuous, AI-assisted engineering discipline — one that operates at the speed of the threat.

That’s the real implication of Project Glasswing.

Attackers won’t wait for your backlog. Your architecture shouldn’t either.